Imbue

We build AI that works for humans

541 followers

Imbue develops tools that help people think, create, and build. We believe technology should be loyal to the user and aligned with human goals.

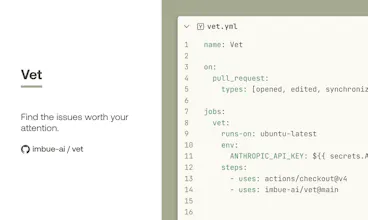

We share many of our tools openly because we believe progress in AI should be collaborative and developer-driven.