Launching today

Luma Agents

Agents that plan, iterate, + refine w/ full creative context

99 followers

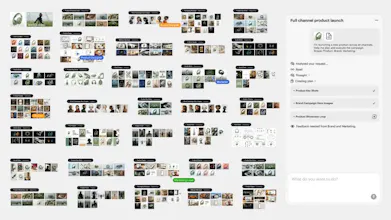

AI agents that plan, generate, and iterate across video, image, and audio in one pipeline. Brand campaigns, product visuals, social ads, video localisation with shared context end-to-end. For creative teams and agencies.

Luma Agents is a creative agent platform that plans, generates, and iterates across video, image, and audio within a single shared workflow.

I'm hunting this because the gap it's addressing is structural, not just a feature gap: most AI creative tools are isolated generators, not pipelines.

The problem: Creative teams at agencies and studios are stuck stitching outputs together across tools. Every handoff is a restart. Context gets lost. Scale means adding headcount.

The solution: Luma Agents embeds context across every stage of a project, from concept to delivery. Agents see what you see, carry that context through video, image, and audio generation, and iterate without you re-explaining from scratch.

What you can do with it:

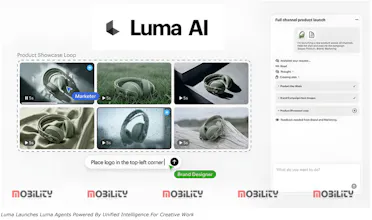

🎬 Run a full brand campaign with cohesive visuals and variants across formats

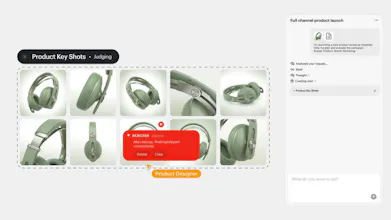

📦 Generate e-commerce product shots (lifestyle, hero, on-model) in one workflow

📱 Produce short-form video ads with hooks, captions, and platform-specific framing

🌍 Localize videos with natural voiceovers and synced visuals across languages

Who it's for: Creative directors, brand teams, and agency producers who are already using AI tools but spending too much time wrangling outputs between them. Also relevant for solo creators who want to produce at team-level volume.

Time will tell whether teams use this to replace specific tools in the stack or layer it on top of what they already have.

P.S. I hunt the latest and greatest launches in tech, SaaS, and AI. Follow to be notified → @rohanrecommends

The "shared context end-to-end" angle is what makes this genuinely interesting to me.

Most AI creative tools treat each output as a fresh start — image, then video, then copy, all disconnected. The result is creative that looks like a ransom note: technically competent, visually inconsistent.

Building ad-vertly.ai, we obsessed over this same problem from the advertising side. A campaign should have a through-line: same brand voice, same visual DNA, same audience understanding — whether you're running a static banner or a 15-second video. The moment you break context, the creative stops feeling like a brand and starts feeling like a vendor.

The market that'll love this first: performance marketing teams at agencies where speed-to-creative is the bottleneck. The question is whether the iteration loop is tight enough to replace the current "generate, export, feedback, regenerate" cycle that kills time.

Really excited to see where this goes. Congrats on the launch!

@gaurav_singh91 Keeping a single through-line for voice and visuals across every asset is exactly what Luma Agents is aiming for. Thanks for stopping by!

mostly layering first - teams don't pull out existing tools until the new one handles edge cases reliably. curious what a full replacement workflow looks like.

@mykola_kondratiuk Totally agree, most teams will layer this in until it proves itself on the edge cases. Thanks for sharing your comment. :)